Authority, Not Accuracy, Is What Makes Feedback Work

AI is a good assistant and sometimes even a good copilot. But people are not ready to hand over authority yet, at least in education.

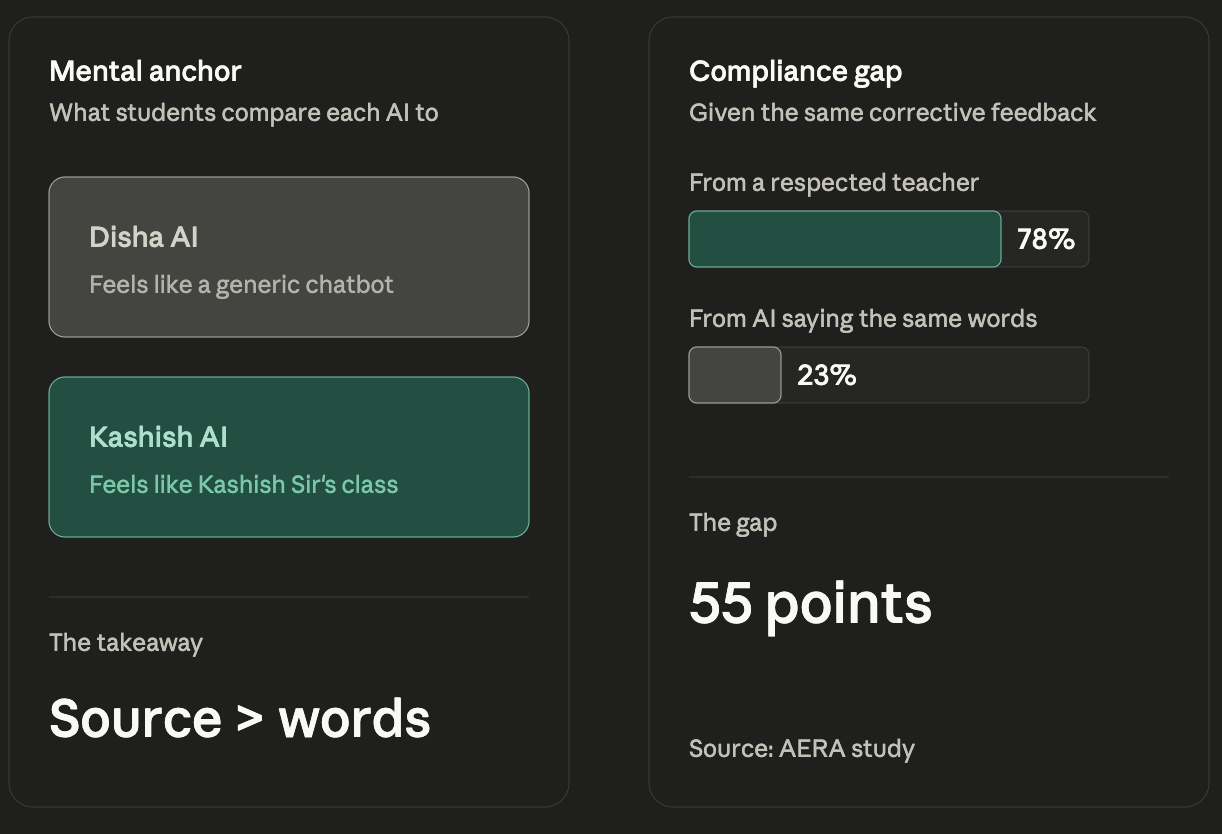

A simple A/B test in Disha AI revealed something we weren't looking for. We were sending personalized reminders to users based on their activity on the platform. Same content, two handles: one from "Disha" (our AI tutor persona) and one from "Kashish" (our co-founder, an IAS officer with 300K followers who students already knew from Instagram).

Users consistently rejected feedback coming from Disha's handle but acted on the same feedback when it came from Kashish's. Same words. Different authority. Different outcomes.

When we dug into user conversations, the gap got sharper. Students weren't comparing Disha's feedback to what a great AI could say. They were comparing it to ChatGPT. To them, Disha was just another chatbot. But Kashish's AI-generated feedback? They were comparing that to "what Kashish Sir said during live class." The human anchor reframed the entire interaction.

This lines up with what researchers are finding more broadly. In a study published by AERA, compliance rates dropped sharply when feedback came from AI instead of a respected human teacher. When the teacher said, "This answer is wrong, try again," compliance was 78%. When GPT-4 said the same words, compliance fell to 23%.

The explanation matters less than the authority behind the words.

AI models soften their tone to sound polite, but in doing so, they leak authority. Students know the difference between feedback from someone they trust and feedback from a tool they don't. And that difference isn't about accuracy or even tone. It's about whether the student believes the source has earned the right to push them.

This is a design problem, not a technology problem. A generic AI tutor has no moat. Students already have ChatGPT. They will not adopt another chatbot unless they see a human teacher standing behind it. The trust bottleneck in AI education isn't about better onboarding or smoother UX. It's about who the student believes is talking to them.

My friend Mainak Roy has been shouting this at the top of his lungs for years: the winning model is AI amplifying teachers, not replacing them. AI handles scale: personalized feedback, practice, scaffolding. Teachers handle authority, accountability, and the human push that makes the difference between compliance and disengagement.

Related: "Learnings from Building an AI Tutor for India's Toughest Exam" covers the full story of building Disha AI, including why 99% of students dropped off and what trust really means in high-stakes test prep.